Progress Report

The Realization of an Avatar-Symbiotic Society where Everyone can Perform Active Roles without Constraint

[2] Research and development on unconstrained spoken dialogue

Progress until FY2024

1. Outline of the project

We investigate automatic speech recognition and dialogue technologies to realize an autonomous spoken dialogue system with human-like hospitality, and we are developing a flexible framework that allows cybernetic avatars (CAs) to switch seamlessly between remotely controlled dialogue and autonomous dialogue according to the operator's intentions and the situation. Application scenarios include attentive listening, presentations, guidance, job interviews, counseling, and customer service.

This group is therefore responsible for spoken language dialogue processing in the project. Spoken dialogue systems have advanced in recent years, but they still operate primarily in quiet environments and are limited to uniform, knowledge-level exchanges. We therefore focus on speech processing that functions robustly in real-world settings such as stores and public spaces, as well as language and dialogue processing capable of generating natural backchannels and empathetic responses. We are also exploring dialogue management and summarization for seamless switching to the human-controlled CA system, and developing a system that integrates these technologies with a CG-CA platform (Figure 1).

2. Outcome so far

Speech processing.

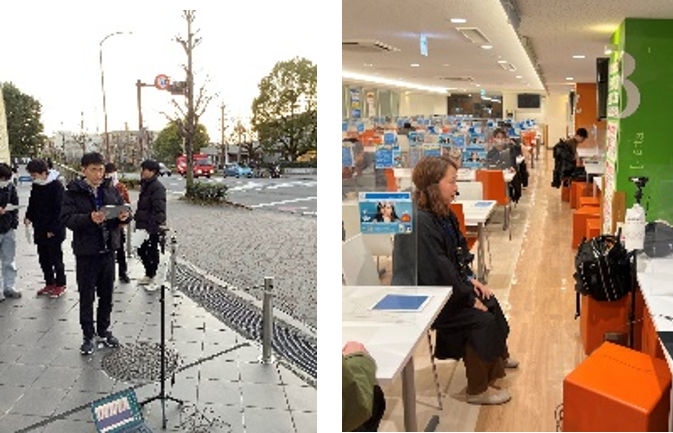

- (1) Whereas conventional systems are designed for a single user, we have developed a spoken dialogue system that can handle multiple users at once (Figure 2). In a field trial, it performed satisfactorily in facilitating smooth dialogue with groups such as families.

- (2) Whereas the quality of synthesized speech has conventionally been assessed manually, we have developed an automatic assessment system, which won the international VoiceMOS Challenge.

Natural language and dialogue processing.

- (1) We have developed a speech-to-speech model (J-Moshi) that can interpret and respond to user speech in real time without converting it into text. We also improved our natural backchannel generation model by using a large pretrained model.

- (2) We constructed one of the largest chat corpora of conversations among family members or acquaintances, in order to model multi-party dialogue.

Integrated system.

- (1) We designed highly naturalistic CG-CAs (Figure 3) and built a software environment that supports both autonomous and remote operation. We also investigated generating the CG-CA’s movements from the operator’s speech, as well as its reactions to the user’s speech.

- (2) We have developed a system that conducts attentive listening with five people in parallel.

- (3) We have built a system that provides explanations and guidance to six people in parallel. It was deployed in a one-month field trial at an aquarium (Figure 4), which confirmed that two operators were sufficient to manage the interactions effectively.

3. Future plans

The individual speech processing and dialogue processing modules have reached a practical level and can be deployed in integrated systems such as conversational robots and CG-CAs. In the future, we will explore multi-party scenarios, envisioning applications that support discussions and interpersonal relationship-building.