-

Project Overview Home Project Overview

Project Overview Ken-ichi Kawarabayashi, Research Director

Ken-ichi Kawarabayashi, Research Director(Professor, National Institute of Informatics)

Research Period: October 2012 to March 2018

Large-scale networks are now used by nearly a billion users and form an indispensable part of modern society. Such large networks include the web structures of the Internet, and social networks like Facebook and Twitter. All these networks are expanding rapidly and are expected to grow to a scale of more than 10 billion users in the near future.

The growth in the volume of information on these expanding networks exceeds the speed of hardware evolution. Current data algorithms will soon be unable to process the volume of information for so called “big data” at a practical speed, particularly for a network on the scale of 10 billion users (1010-class network). Thus, development of high-speed data processing algorithms is an urgent task.

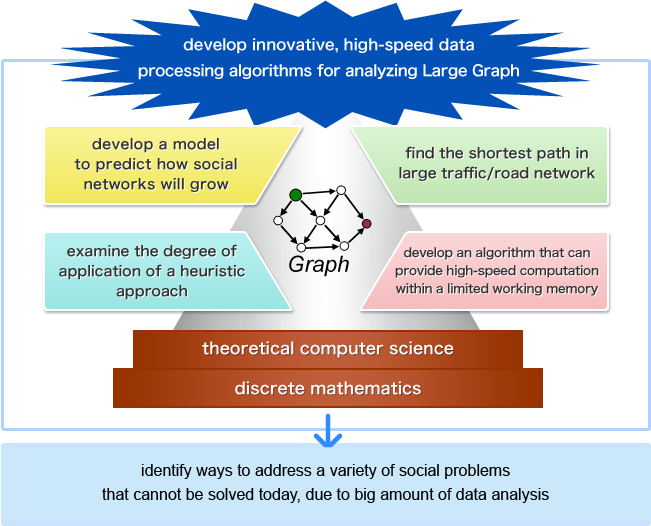

With this backdrop, the Kawarabayashi Large Graph Project aims to develop such high-speed data processing algorithms. In this project, we understand a large-scale network to mean a structure with nodes connected by edges; in other words, we regard the overall network as a massive graph with more than 10 billion nodes. Based on this, the project endeavors to establish algorithms by analyzing this massive graph using cutting-edge mathematical approaches, including theoretical computer science and discrete mathematics.

The project is working to achieve the following four targets:

(1) Shorten the route search processing time by developing a preprocessing algorithm based on up-to-date theoretical studies on finding the shortest path between two points in large traffic/road networks and thereby establish data structures of a size that can be practically processed.

(2) Develop a model to predict how web structures and social networks will grow in the near future, something that is currently difficult to achieve as their changes and expansion are so rapid.

(3) Develop an algorithm that can provide high-speed computation in environments with limited working memory, such as mobile phones and PCs, where High Performance Computers (HPC) are not available for data analysis.

(4) Within the frame of large-scale network analysis, theoretically examine the degree of application of a heuristic approach (experience-based techniques to gain a reasonable solution even though it may not be the best), which we believe will contribute to increasing the speed of algorithm processing.

Through such research, the project aims to establish new mathematical theories, as well as to demonstrate the effectiveness of theoretical studies in network analysis. Further, we are endeavoring to identify ways to address a variety of problems that cannot be solved by conventional methods due to the amount of data to be processed. This should also lead to solutions for the traffic network and the web structure analysis, bioinformatics, and contribute to analyses to identify efficient information transmission methods in disasters.

The project is also expected to contribute to realizing an environment where individuals can gain the optimum results and effects from analysis of massive data volumes, such as sensing data, and move towards delivering its strategic target: “development of core technologies to realize an information environment in harmony with human beings”

Research Groups